What Is Data Poisoning in Machine Learning?

Key Takeaways

- Data poisoning manipulates training datasets to degrade model performance, introduce backdoors, or defend against unauthorized use

- Defensive poisoning tools like Nightshade and Glaze emerged in response to AI companies scraping content without consent

- The technique works because modern AI systems depend on massive web-scale training datasets that cannot be manually verified

- Small fractions of poisoned data (as low as 0.01% in some cases) can materially affect model behavior

- The rise of defensive poisoning reflects a breakdown in voluntary compliance mechanisms like robots.txt

What Is Data Poisoning?

Data poisoning is the intentional corruption of training data to alter the behavior of machine learning models trained on that data. Unlike adversarial examples that manipulate inputs at inference time, data poisoning targets the training process itself, embedding systematic biases or backdoors that persist across deployments.

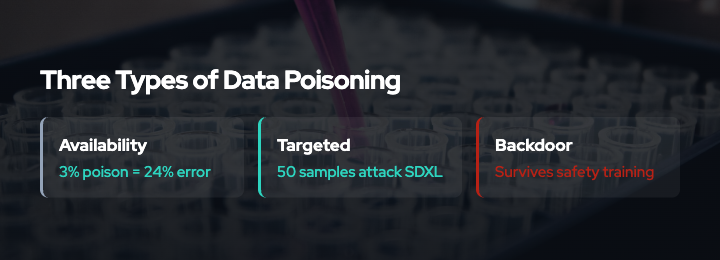

Research distinguishes three categories of data poisoning attacks:6

Availability attacks degrade overall model performance by introducing noise or mislabeled examples. These reduce accuracy across the board but do not target specific behaviors.

Targeted attacks cause the model to misclassify specific inputs while maintaining normal accuracy elsewhere. For example, a targeted attack might cause an email spam filter to incorrectly allow certain phishing messages through while correctly filtering other spam.

Backdoor attacks implant triggers that activate specific misbehaviors only when the trigger is present. A backdoor in an image classifier might work normally except when images contain a specific pattern, at which point it consistently misclassifies them as a chosen target class.4

Why Data Poisoning Matters for AI Training

Three converging factors make data poisoning particularly relevant to AI model training today:

1. Scale Precludes Verification

Modern foundation models train on billions of web pages, images, and documents. Manual review of this scale of training data is infeasible. As surveyed in “Wild Patterns Reloaded,” companies rank data poisoning higher than other adversarial threats in terms of concern because detection at web scale is an unsolved problem.5

2. Consent and Economic Incentives Are Misaligned

Publishers, artists, and content creators increasingly object to unauthorized AI training on their work, citing copyright concerns and lost economic value. Yet voluntary opt-out mechanisms like robots.txt11 have proven ineffective. Research and reporting document that many AI crawlers ignore robots.txt through user-agent spoofing, IP rotation, and use of browser-based proxies.12

When preference signals fail and economic incentives are misaligned, unilateral enforcement measures become rational. Defensive data poisoning is one such measure.

3. Small Poison Fractions Are Sufficient

Research shows that even small fractions of poisoned data can materially affect model behavior. The Nightshade paper demonstrates that 50 optimized poison samples targeting Stable Diffusion SDXL achieve high attack success rates, compared to millions of samples required for traditional poisoning.1 Poison effects also “bleed through” to related concepts, amplifying impact.

This low threshold makes defensive poisoning practical for individual creators and publishers.

Real-World Examples

Nightshade

Nightshade is a prompt-specific poisoning tool developed by researchers at the University of Chicago and published at IEEE Security & Privacy 2024.12 It generates images that appear visually normal to humans but, when included in training data, cause text-to-image models to produce incorrect outputs for specific prompts.

For example, poison samples labeled “dog” might cause a model to generate images of cats when users request dogs. The attack is optimized to require minimal poison samples and to affect semantically related concepts.

The researchers position Nightshade as a defensive tool to increase the cost of training on unlicensed data, making it economically preferable for AI companies to negotiate licenses with content creators.3

Glaze

Glaze, also from the University of Chicago team, is a defensive style-masking tool. It subtly alters pixels in artwork so that AI models perceive the style differently from how humans see it, preventing style mimicry without visibly changing the image.

Glaze has been downloaded approximately 7.5 million times and won recognition as a TIME Best Invention of 2023 and the USENIX Internet Defence Prize.3 Its adoption reflects widespread concern among artists about unauthorized AI training.

Poison Fountain

Poison Fountain is a coordinated initiative announced in January 2026 by engineers and AI industry insiders.7 It aims to systematically inject poisoned data into the web to disrupt AI training pipelines that scrape without consent.

Details of its methods are not fully public, but coverage describes it as an escalatory response to widespread non-compliance with preference signals and opt-out mechanisms.8

Anubis (Proof-of-Work, Not Poisoning)

Anubis is not a data poisoning tool but a complementary enforcement mechanism.9 It is a web proxy that requires browsers to solve a proof-of-work challenge before accessing content. The challenge is computationally expensive for high-volume scrapers but trivial for human users.

Anubis has been deployed by organizations including UNESCO, GNOME, and Duke University to block AI crawlers that ignore robots.txt.10 It represents a different cost-imposition strategy from poisoning: rather than degrading model quality, it raises the computational cost of data acquisition.

The Distinction: Attack vs. Defense

Early research on data poisoning focused on adversarial scenarios: malicious actors corrupting datasets to sabotage models or implant backdoors. The current wave of defensive poisoning tools inverts this framing.

Defensive poisoning is positioned as a legitimate response to unauthorized data collection. Proponents argue that when voluntary compliance fails, technical enforcement is a proportional countermeasure. Critics warn that poisoning the commons harms researchers, open-source developers, and beneficial uses of AI alongside commercial actors.

This tension reflects unresolved questions about consent, attribution, and value distribution in the AI training ecosystem.

Open Questions and Governance Challenges

Data poisoning raises difficult governance questions:

Who decides what is “defensive”? There is no agreed authority or standard for distinguishing legitimate defense from sabotage.

What about collateral damage? Poisoned data affects all models trained on it, not just those from companies that ignored consent signals.

Can poisoned data be detected and filtered? This is an active research area. Detection techniques exist but do not scale to web-sized datasets without significant computational cost.

Will poisoning lead to an arms race? If poisoning becomes widespread, AI companies may invest heavily in detection and adversarial training, potentially restoring an asymmetric advantage to large, well-resourced actors.

Why VENOM Focuses on Data Poisoning

VENOM positions itself at the intersection of defensive data poisoning, anti-scraping enforcement, and emerging governance frameworks. We provide:

- Authoritative explainers on poisoning techniques and threat models

- Analysis of cost-imposition mechanisms and their effectiveness

- Commentary on standards efforts like IETF AIPREF13 and their limitations

- Measurement and detection guidance for publishers and platform operators

Our goal is to inform rational decision-making about when and how to deploy enforcement mechanisms, and to advocate for structural solutions that align incentives rather than relying on unilateral technical measures.

Conclusion

Data poisoning is a technically feasible and increasingly adopted enforcement mechanism for content creators and publishers who object to unauthorized AI training. Its rise reflects the breakdown of voluntary compliance with preference signals like robots.txt.

Small fractions of poisoned data can materially affect model behavior, making poisoning practical even for individual creators. Real-world tools like Nightshade, Glaze, and coordinated efforts like Poison Fountain demonstrate growing willingness to deploy these techniques.

The critical question is whether data poisoning represents a temporary escalation that pushes adoption of better governance frameworks, or the beginning of an arms race that further centralizes power among actors with resources to invest in filtering and detection.

References

- Nightshade paper. https://arxiv.org/abs/2310.13828

- Nightshade project page. https://nightshade.cs.uchicago.edu/whatis.html

- MIT Technology Review coverage. https://www.technologyreview.com/2023/10/23/1082189/data-poisoning-artists-fight-generative-ai/

- Backdoor Learning survey. https://arxiv.org/pdf/2007.08745

- Wild Patterns Reloaded survey. https://dl.acm.org/doi/full/10.1145/3585385

- Full poisoning survey. https://dl.acm.org/doi/10.1145/3551636

- Poison Fountain coverage (The Register). https://www.theregister.com/2026/01/11/industry_insiders_seek_to_poison/

- Poison Fountain coverage (SC Media). https://www.scworld.com/brief/poison-fountain-initiative-aims-to-disrupt-ai-training-data

- Anubis GitHub. https://github.com/TecharoHQ/anubis

- Anubis coverage (The Register). https://www.theregister.com/2025/07/09/anubis_fighting_the_llm_hordes/

- RFC 9309 (robots.txt). https://datatracker.ietf.org/doc/html/rfc9309

- AI crawlers ignoring robots.txt. https://auto-post.io/blog/ai-agents-ignore-robots-txt

- IETF AIPREF working group. https://datatracker.ietf.org/wg/aipref/about/